Dealing with Super Intelligence

A summary of Vitalik Buterin's blog post about d/acc and super intelligence.

Hello, Hypers!

Today’s post is slightly different: a summary and review of a recent blog post. It is not an Under the Hype post but a post by the co-founder of Ethereum, Vitalik Buterin.

This specific article, titled “My Techno-Optimism”, is about the balance between technological acceleration and human safety, and it is especially focused on AGI development. More precisely, it is about the potential risks of Super Intelligence and how to minimize those risks.

But before diving into the article, I would like to tell you why I think Vitalik is worth listening to (and reading). I will also add my own opinions and viewpoints to the summary, so this issue of Under the Hype won’t be too different after all.

Why Pay Attention to Vitalik?

He’s not just another rich guy with enough initiative and luck to earn millions from a tech startup. First of all, Ethereum is not exactly a startup. Second, Vitalik's net worth is small compared to that of the big fish in tech.

But more importantly, Vitalik is a true tech guy. He’s not a businessman who believes his wisdom is proportional to his fortune. He has been actively developing a technology based on the principles of open source and decentralization. It is one of the most successful open-source projects in the short history of the movement.

He has witnessed the evolution of this big collective effort we call Ethereum. His articles and social media posts always show deep tech knowledge. They also show his sober and fact-backed viewpoints about the future of technology.

If this has not convinced you to take a closer look at what Vitalik Buterin says, I invite you to read this summary of one of his latest articles about AI, Super Intelligence, and techno-optimism.

Note: I’m not saying I always agree with Vitalik. I’m just saying he is an interesting person and I like to read what he writes.

The Intentional Effort

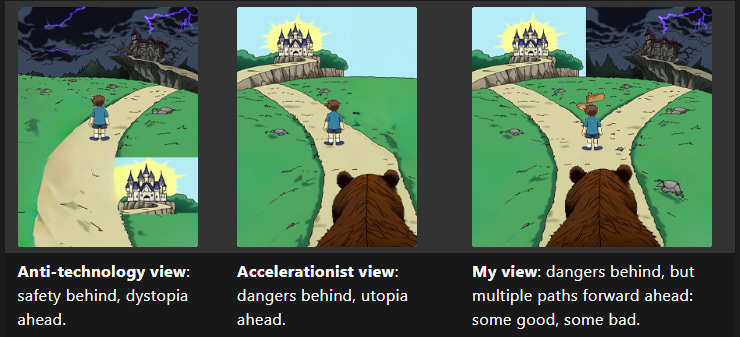

The first part of the article classifies humanity’s sentiments toward technology. Since the latest big breakthroughs in AI, the media has been flooded with these sentiments. Vitalik classifies them into three categories:

Anti-Tech: We were safe and now we are heading to disaster.

Accelerationist: We’ll face many dangers if we stop developing technology and we’ll get to a bright future if we continue tech development.

Alternative (Vitalik’s view): We’ll face many dangers if we stop developing technology. We can certainly get to a bright future, but we can also make mistakes that lead us to a dystopian future.

Figure taken from the original article

The second viewpoint in the list above is what the e/acc (effective accelerationism) movement would defend. It is based on some strong evidence from the past. For example, life expectancy has been steadily increasing over the last century (except for some specific periods such as the World Wars). Today almost all the information we need is in our pockets, and global poverty is dropping at a fast rate.

Evidence of how technology has improved our world is everywhere. But there is one exception: climate change. Although even the worst-case scenario won’t cause human extinction, it would surely cause the death of millions of humans, far more deaths than any previous war in history.

However, there are precedents for climate crises being solved by the collective effort of a large number of people without renouncing technological development (references in the original article). Also, we are making big progress toward clean and sustainable energy generation.

But the thing is that these solutions have not come automatically as a consequence of an economic system or technology evolution. Instead, we had to intentionally make a turn toward those solutions. In Vitalik’s words:

Often, it really is the case that version N of our civilization's technology causes a problem, and version N+1 fixes it. However, this does not happen automatically, and requires intentional human effort.

I find this observation quite interesting, since we sometimes tend to think that capitalism or a market-first society will eventually arrive at a solution whenever the incentives are big enough for people to work on it. And to be honest, I don’t find the evidence provided in the article sufficient to completely reject that claim. What we have witnessed in recent years is that governments and individuals are still moved by their own interests and benefits. Only when climate change started to harm those benefits did proposals to solve it start to be implemented. The agreements between nations to mitigate climate change can be seen as a result of the pressures and demands of some of those nations on behalf of their interests.

To summarize, the point of the article here is that we need an intentional effort to take the path to a bright future and avoid the path to dystopia.

AI is a New Type of Danger

Vitalik says that AI is a different kind of technology, in the sense that it is about creating a new mind that is rapidly gaining intelligence with the passage of time. It is not just a technology that can change the global order but one that can potentially overtake humans as the most advanced “species” on our planet.

Another thing that sets AI apart from other technologies is the magnitude of the risk it implies. Although a nuclear war or climate change could wipe out a significant part of humanity, there would be islands of survivors. In the case of a Super Intelligence that turns against us, we are talking about human extinction.

As for AI turning against us, there is a lot of material about the possible misalignment between AI and human goals, so I won’t cover it here. In the original article, you can find a summary of those opinions.

Instead, I’d like to talk about the alternative future: a world with an Artificial Super Intelligence that does not turn against us. As Vitalik says, it seems unlikely that this Super Intelligence would not be in charge of such a world. In this situation, humans would be pets of the Super Intelligence and would lose the power to shape their own future.

Regardless of how likely we are to reach these seemingly extreme points in our evolution, I agree with Vitalik that this is not the world I (or my descendants) would like to live in.

So, what is the alternative?

The d/acc Idea

Like Vitalik, I am sympathetic to the e/acc movement in a lot of contexts. There are many cases in which delaying or stopping technology could be disastrous.

"20 people dead in a medical experiment gone wrong is a tragedy, but 200000 people dead from life-saving treatments being delayed is a statistic".

But there are other contexts in which we should be more cautious, such as military technology. Being in favor of accelerating military technology means believing that the owners of that technology are going to use it for good. Vitalik’s question makes the dilemma clearer:

… military technology is good because military technology is being built and controlled by America and America is good. Does being an e/acc require being an America maximalist, betting everything on both the government's present and future morals and the country's future success?

History has shown us that giving too much power to a small group of people usually has disastrous consequences. But it is no surprise that we still insist on this approach time after time: it is a much easier approach. Decentralization is hard to achieve when we are dealing with hard problems. That’s why all armies have a strict centralized structure. But Vitalik proposes an alternative philosophy:

This philosophy also goes quite a bit broader than AI, and I would argue that it applies well even in worlds where AI risk concerns turn out to be largely unfounded. I will refer to this philosophy by the name of d/acc.

The "d" here can stand for many things; particularly, defense, decentralization, democracy and differential.

Defensive vs. Offensive

We can split technology into two categories: defensive and offensive. Some technologies make it easier to attack others […]. Others make it easier to defend and even defend without reliance on large centralized actors.

Vitalik states that a defense-favoring world is a better world:

First of course is the direct benefit of safety: fewer people die, less economic value gets destroyed, less time is wasted on conflict. What is less appreciated though is that a defense-favoring world makes it easier for healthier, more open and more freedom-respecting forms of governance to thrive.

He says that an obvious example of this is Switzerland. I won’t go into detail here. But the idea is that centralization is necessary when we need to react to dangers. Centralization allows for faster and more coordinated responses. But if defensive technology is advanced enough, we could rely on it to protect us and move to more decentralized forms of governance.

There are more examples in the original article.

The core idea is to promote and focus on defensive technology first. For example, we need to focus on vaccines first and on virus experiments later. If every person in the world had access to locally manufactured and verifiable open-source vaccines or other prophylactics, then we wouldn’t need to rely on government management during a potential pandemic event.

To give a more detailed description of what this defense-favoring world would look like, Vitalik classifies defensive technologies into four categories: macro, micro, cyber, and info. I won’t explain each of them here, but I strongly recommend you read about them in the original article, mainly because the explanations provide many examples of what this hypothetical world would look like.

Defense Against the Machine

Now we are going to talk about the defensive solutions for AI. Of course, there is an “easy path”: creating a small organization that takes care of all AGI advancements. But there is evidence that this is not what people want. Also, I think the point of this article is to try to find more decentralized solutions.

Another proposal is to make sure there are lots of people and companies developing lots of AIs, so that none of them grows far more powerful than the others. This reminds me of the nuclear arms race, in which powerful countries are deterred from attacking each other because the costs of a war would be unaffordable.

But as technology becomes more accessible each day and its uses and consequences become far less predictable, it is not clear how this approach can be reproduced in the AI research field. Vitalik’s experience developing Ethereum has also shown that this kind of “polytheism” could be an inherently unstable equilibrium. So, what is the best solution then?

There is a third alternative:

… focus less on AI as something separate from humans, and more on tools that enhance human cognition rather than replacing it.

The idea is to create AI tools that are aimed at assisting us instead of replacing us. For example, an AI version of Photoshop in which an artist can collaborate with the AI to create a final product based on a real-time feedback process.

I think the feedback part is key. In my opinion, the critical difference between an assistive technology and an autonomous one is the necessity of human feedback to perform well.

But how can we force every research group in the world to work on assistive AI instead of trying to create a Super Autonomous Intelligence? We just can’t.

Be ready! We are near the end but now things get crazy.

The solution would be to be ready to fight back. When we say Super Intelligence, we are talking about a form of intelligence that can think a thousand times faster than we can. No combination of humans using tools with their hands is going to be able to hold its own against that.

For Vitalik, the first natural step is creating brain-computer interfaces. We are now getting used to the natural language interface between humans and AIs. One of the great advantages of this interface is that the communication loop (AI result → human feedback → AI result) lasts for just a few seconds. A brain-computer interface would reduce this loop duration to a few milliseconds! This would be a great step toward achieving Super Intelligence based on human-AI collaboration.

Later stages of such a roadmap admittedly get weird. In addition to brain-computer interfaces, there are various paths to improving our brains directly through innovations in biology. An eventual further step, which merges both paths, may involve uploading our minds to run on computers directly. This would also be the ultimate d/acc for physical security: protecting ourselves from harm would no longer be a challenging problem of protecting inevitably-squishy human bodies, but rather a much simpler problem of making data backups.

As you can imagine, a lot of philosophical questions arise. This would require a shift in our deepest ethical foundations. Evolution has taught us to protect our bodies and internal organs at all costs. This has led us to favor non-invasive research and technology over invasive ones. In some respects, solutions like this seem even further away than AGI and Super Intelligence.

On the other hand, I feel more attracted to this solution than to centralized ones. And I think it is definitely better than just pressing the pause button on AI research. One important point from Vitalik is that all these efforts should be carried out by a security-focused open-source movement, rather than closed and proprietary corporations and venture capital funds. After all, we are talking about literally reading and writing to people's minds.

Conclusions

This was a quick summary of “My Techno-Optimism” by Vitalik Buterin. I tried to keep it short and focus on the main issues regarding the proposal for AI development in the long run.

I strongly recommend reading the original article. I skipped a lot of examples and arguments that would help you get a more complete idea of the topic. The article is also full of references to research that supports or complements Vitalik’s arguments. I could spend months just clicking on those references.

Of course, this post and Under the Hype are not sponsored by anyone (we are not that big). I’m publishing this summary with my honest opinion about an article and a person I truly admire. I’m doing so because I think it is interesting for my readers.

I hope you have enjoyed this slightly different post. I’d love to hear your take on some of Vitalik’s proposals or about my additions. As always, thanks a lot for staying one more week.

See you next Tuesday!